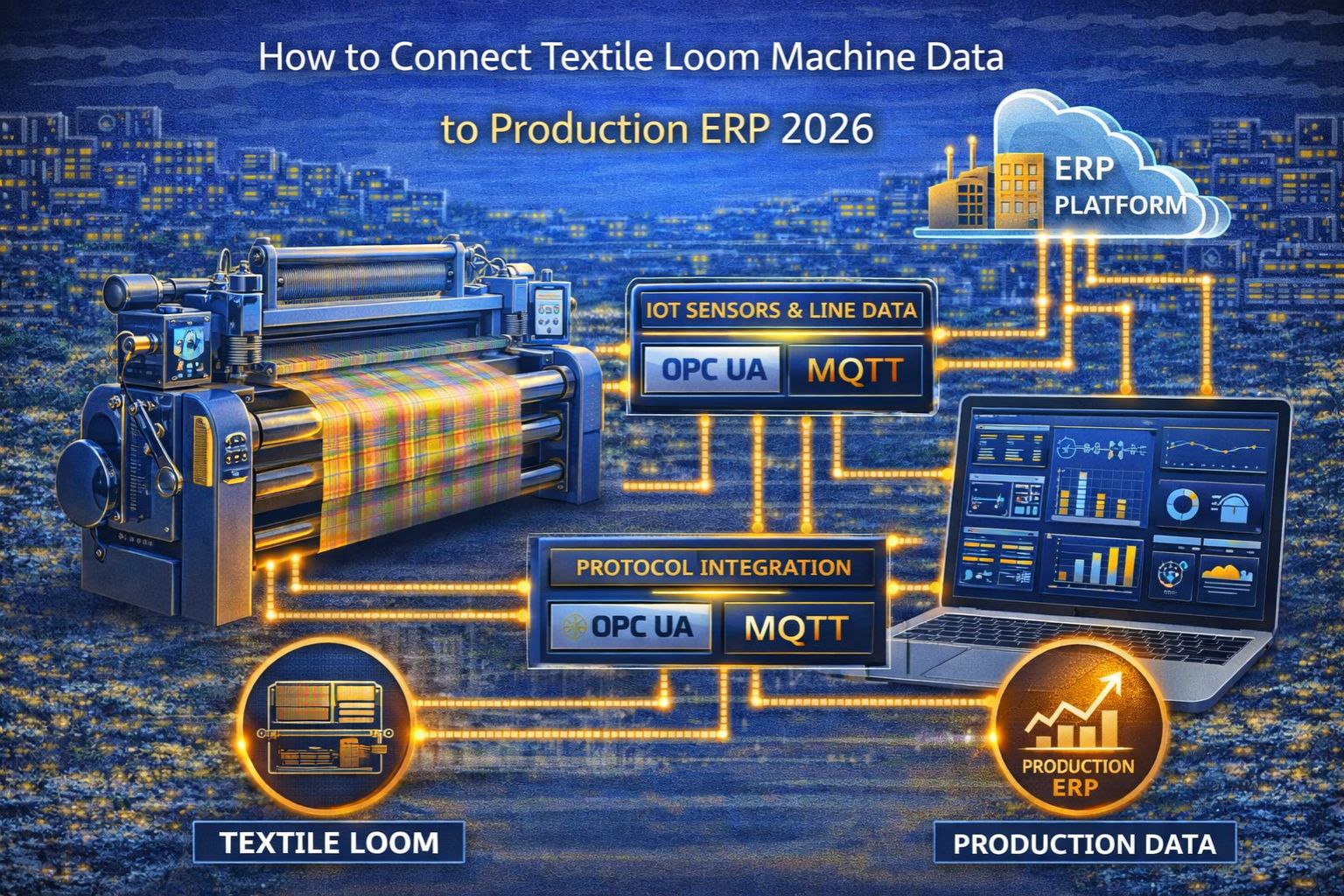

How to Connect Textile Loom Machine Data to a Production ERP (2026)


So, you want to connect textile loom data to a production ERP in 2026. Honestly, it's less about finding a connector and more about navigating the industrial protocol timeouts and data-structure mismatches that silently corrupt your production counts and efficiency metrics before the data ever reaches the business layer. The core challenge isn't the connection itself. It's making sure that real-time data from legacy PLCs and newer IoT sensors keeps its context and integrity as it crosses from the operations floor to the IT systems. That's the OT-to-IT boundary, and it's where most internal projects fail. The cause is often something as technical, yet critical, as unmanaged packet buffering at the gateway.

What Loom Data Integration Actually Means for Production Floors

In a real textile mill, "integration" means streaming metrics such as pick rate, stoppage codes, warp tension, and weft breaks from dozens of looms into the ERP's production module. You have to do this without creating a 15-minute data lag that makes your live dashboards pointless. Teams often assume a simple OPC UA or MQTT bridge will just work. What they overlook is that proprietary machine controllers batch diagnostic data at non-standard intervals. That batching produces timestamp mismatches the ERP can't reconcile, and the result is inaccurate roll-ups of how much yardage was actually produced.
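One way to keep batched data from skewing roll-ups is to bucket each record by the time it was produced on the controller, not by the time the batch arrived. The sketch below assumes a hypothetical record shape of (loom ID, controller timestamp, yards since the previous record). It also assumes a per-loom clock offset that you measure separately. `hourly_yardage` and `clock_offsets` are illustrative names, not part of any real product API.

```python
from collections import defaultdict
from datetime import datetime, timedelta
from typing import Dict, Iterable, Optional, Tuple

# Hypothetical batched record: (loom_id, controller_timestamp, yards_since_previous_record)
Record = Tuple[str, datetime, float]

def hourly_yardage(
    records: Iterable[Record],
    clock_offsets: Optional[Dict[str, timedelta]] = None,
) -> Dict[Tuple[str, datetime], float]:
    """Bucket yardage by the hour it was produced, not the hour the batch arrived.

    clock_offsets holds an assumed per-loom correction (reference time minus
    controller time), measured separately, e.g. during commissioning.
    """
    offsets = clock_offsets or {}
    totals: Dict[Tuple[str, datetime], float] = defaultdict(float)
    for loom_id, controller_ts, yards in records:
        # Correct the controller clock before deciding which hour the yardage belongs to.
        produced_at = controller_ts + offsets.get(loom_id, timedelta(0))
        hour = produced_at.replace(minute=0, second=0, microsecond=0)
        totals[(loom_id, hour)] += yards
    return dict(totals)

# A batch that reaches the gateway at 10:05 but spans two production hours.
batch = [
    ("L01", datetime(2026, 3, 2, 9, 50), 4.0),
    ("L01", datetime(2026, 3, 2, 10, 2), 1.5),
]
print(hourly_yardage(batch))
```

If you bucketed by arrival time, all 5.5 yards would land in the 10:00 hour. Bucketing by the corrected production time splits them correctly.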

The Reality of Live Data Flow at Industrial Scale

When you scale up from a single loom to a full production line, the data volume from vibration sensors and quality cameras can overwhelm a basic IoT gateway, especially one that wasn't designed for sustained high-frequency polling. The resulting latency isn't just a minor delay; it triggers a cascading failure. The ERP's scheduling module starts making decisions based on stale data. It might issue work orders for machines that are already down for maintenance, or adjust speeds based on efficiency readings that are hours old.
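"Managed" buffering at the gateway can be as simple as two rules. First, the buffer has a hard size limit and counts what it evicts. Second, readings older than a freshness threshold are separated out before anything downstream schedules on them. The sketch below is a minimal illustration under those assumptions. `GatewayBuffer`, `Reading`, `capacity`, and `max_age_s` are hypothetical names, and timestamps are assumed to come from a single gateway clock.

```python
import time
from collections import deque
from dataclasses import dataclass
from typing import List, Optional, Tuple

@dataclass
class Reading:
    loom_id: str
    metric: str
    value: float
    captured_at: float  # seconds since epoch, assumed to be from the gateway clock

class GatewayBuffer:
    """Bounded buffer that evicts the oldest reading when full and counts evictions."""

    def __init__(self, capacity: int, max_age_s: float):
        self._queue: deque = deque(maxlen=capacity)
        self.max_age_s = max_age_s
        self.dropped = 0  # evictions, exported as a health metric instead of lost silently

    def push(self, reading: Reading) -> None:
        if len(self._queue) == self._queue.maxlen:
            self.dropped += 1  # deque(maxlen=...) evicts the oldest item without telling you
        self._queue.append(reading)

    def drain(self, now: Optional[float] = None) -> Tuple[List[Reading], List[Reading]]:
        """Empty the buffer, splitting readings into fresh and stale by age."""
        now = time.time() if now is None else now
        fresh: List[Reading] = []
        stale: List[Reading] = []
        while self._queue:
            r = self._queue.popleft()
            (fresh if now - r.captured_at <= self.max_age_s else stale).append(r)
        return fresh, stale

buf = GatewayBuffer(capacity=2, max_age_s=30)
buf.push(Reading("L01", "pick_rate", 600.0, 100.0))
buf.push(Reading("L02", "pick_rate", 580.0, 150.0))
buf.push(Reading("L03", "pick_rate", 610.0, 160.0))  # evicts L01
fresh, stale = buf.drain(now=185.0)
```

In this sketch, stale readings are still returned rather than discarded. They can be logged for history, while only the fresh list feeds scheduling decisions.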

The Critical Mistake in Assuming Protocol Translation is Enough

The most common failure pattern is treating the whole thing as a simple protocol translation problem: converting Modbus TCP register reads into REST API calls and calling it a day. That approach misses the entire semantic layer. A loom's "stop reason" code in a PLC register map doesn't automatically map to the ERP's predefined downtime categories, such as "mechanical" or "thread break." Without that contextual translation, every stoppage gets logged as generic "unscheduled downtime." That destroys the data's value for root cause analysis and leaves a major blind spot in any protocol health audit.
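The semantic layer can start as an explicit lookup table keyed by controller model and stop code, because the same number can mean different things on different controllers. The sketch below uses made-up model names and codes; real values have to come from each vendor's register documentation. Unknown codes still fall back to "unscheduled downtime," but they are recorded so the gap stays visible.

```python
from enum import Enum
from typing import Dict, Set, Tuple

class DowntimeCategory(Enum):
    MECHANICAL = "mechanical"
    THREAD_BREAK = "thread break"
    UNSCHEDULED = "unscheduled downtime"

# Hypothetical controller models and stop codes, for illustration only.
STOP_CODE_MAP: Dict[Tuple[str, int], DowntimeCategory] = {
    ("model_a", 11): DowntimeCategory.THREAD_BREAK,  # weft break
    ("model_a", 12): DowntimeCategory.THREAD_BREAK,  # warp break
    ("model_a", 20): DowntimeCategory.MECHANICAL,    # drive fault
    ("model_b", 4): DowntimeCategory.THREAD_BREAK,   # weft break
}

def classify_stop(model: str, code: int, unmapped: Set[Tuple[str, int]]) -> DowntimeCategory:
    """Translate a raw PLC stop code into an ERP downtime category.

    Codes missing from the map fall back to UNSCHEDULED and are added to
    `unmapped`, so they can be reviewed instead of vanishing into one bucket.
    """
    category = STOP_CODE_MAP.get((model, code))
    if category is None:
        unmapped.add((model, code))
        return DowntimeCategory.UNSCHEDULED
    return category
```

Reviewing the `unmapped` set periodically is one way to measure how much of your downtime is still uncategorized.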

When to Tune, Reconfigure, or Redesign the Data Pipeline

Choosing the right path is tough, but the decision boundary is fairly clear. If your latency is under 30 seconds and your data mappings are roughly 95% accurate or better, you can probably just tune gateway timeouts and buffer sizes. If your stoppage and efficiency data is consistently misclassified, you need to reconfigure the entire semantic translation layer. But when you're dealing with mixed-vintage looms whose protocol instability causes daily data dropouts, internal fixes usually aren't enough. At that point, reliable integration requires a redesign around a deterministic data engine that handles protocol normalization and context preservation. That's the scenario where platforms like snipcol end up providing the necessary architectural stability.
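The decision boundary above can be written down as a small rule. The 30-second and 95% thresholds come from the text. Everything else is an assumption: the field names, using mapping accuracy below 95% as the test for "consistently misclassified," and a boolean flag for daily dropouts. The text doesn't cover high latency with good mappings, so that case is returned as unclassified rather than guessed.

```python
from dataclasses import dataclass

@dataclass
class PipelineHealth:
    latency_s: float          # end-to-end latency, loom to ERP (how you measure it is up to you)
    mapping_accuracy: float   # share of records mapped to the correct ERP category, 0.0 to 1.0
    mixed_vintage_fleet: bool
    daily_dropouts: bool      # protocol-related data dropouts occur every day

def recommend_action(h: PipelineHealth) -> str:
    """Encode the tune / reconfigure / redesign boundary (illustrative thresholds)."""
    if h.mixed_vintage_fleet and h.daily_dropouts:
        return "redesign"
    if h.mapping_accuracy < 0.95:  # assumed proxy for "consistently misclassified"
        return "reconfigure"
    if h.latency_s < 30:
        return "tune"
    return "unclassified"  # high latency with good mappings: not covered by the rule above
```

The checks are ordered from most to least severe. A fleet with daily dropouts gets a redesign recommendation even if its mappings happen to look accurate.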

FAQ

Question: What is the first step to connect an old textile loom to a modern ERP?

Answer: Honestly, the first step is a thorough physical and protocol audit. Identify the controller type (often a legacy PLC), find the available data ports, and pin down the exact industrial protocol, such as Modbus RTU or Profibus. Then, before you buy any hardware, map the critical machine registers (speed, stops, counters) to the specific production KPIs your ERP actually needs.
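The output of that audit can be captured as a register map that links each register to an ERP KPI. The sketch below uses hypothetical addresses, scales, and KPI names. It also shows one detail worth checking during the audit: Modbus registers are 16 bits wide, so a 32-bit counter spans two registers. Whether the high word comes first varies by controller, so treat the word order here as an assumption to verify.

```python
from dataclasses import dataclass
from typing import Dict, List

@dataclass(frozen=True)
class RegisterSpec:
    address: int   # holding-register address (hypothetical)
    words: int     # number of 16-bit registers the value spans
    scale: float   # raw integer * scale = engineering units
    erp_kpi: str   # ERP field this value feeds (hypothetical name)

REGISTER_MAP: Dict[str, RegisterSpec] = {
    "speed_ppm": RegisterSpec(address=100, words=1, scale=1.0, erp_kpi="loom_speed"),
    "pick_counter": RegisterSpec(address=102, words=2, scale=1.0, erp_kpi="picks_total"),
    "stop_code": RegisterSpec(address=110, words=1, scale=1.0, erp_kpi="downtime_reason"),
}

def decode(spec: RegisterSpec, raw: List[int], high_word_first: bool = True) -> float:
    """Combine raw 16-bit register values into an unsigned integer and apply scaling."""
    if len(raw) != spec.words:
        raise ValueError(f"expected {spec.words} registers, got {len(raw)}")
    words = raw if high_word_first else list(reversed(raw))
    value = 0
    for w in words:
        value = (value << 16) | (w & 0xFFFF)  # shift in each 16-bit word
    return value * spec.scale
```

For example, `decode(REGISTER_MAP["pick_counter"], [0x0001, 0x86A0])` combines the two words into 100000. If you read them in the wrong order, you get a very different number, which is an easy way to corrupt production counts without any error being raised.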

Question: Why does my loom data show up in the ERP but the production numbers are always wrong?

Answer: This is almost always a data context or unit mismatch. The loom might output picks per minute while the ERP expects meters woven per hour. Or machine stoppage events aren't filtered out of the runtime counter, so the ERP counts stopped time as productive time. The raw data arrives, but its meaning gets lost in translation.
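Both fixes are small once they are made explicit. Each pick inserts one weft thread, so woven length is picks divided by pick density (picks per centimeter, assumed here to be known per fabric style). Productive runtime is shift length minus stop intervals, clipped to the shift and with overlapping stops merged so they aren't subtracted twice. The function names below are illustrative, and all times are assumed to be in seconds.

```python
from typing import Iterable, Optional, Tuple

def meters_per_hour(picks_per_minute: float, picks_per_cm: float) -> float:
    """Convert loom speed to woven length: length = picks / pick density."""
    picks_per_meter = picks_per_cm * 100
    return picks_per_minute * 60 / picks_per_meter

def productive_seconds(
    shift_start: float, shift_end: float, stops: Iterable[Tuple[float, float]]
) -> float:
    """Shift length minus stopped time, with stops clipped to the shift and overlaps merged."""
    clipped = sorted(
        (max(s, shift_start), min(e, shift_end))
        for s, e in stops
        if e > shift_start and s < shift_end  # ignore stops entirely outside the shift
    )
    stopped = 0.0
    cur_start: Optional[float] = None
    cur_end: Optional[float] = None
    for s, e in clipped:
        if cur_end is None or s > cur_end:
            if cur_end is not None:
                stopped += cur_end - cur_start  # close the previous merged interval
            cur_start, cur_end = s, e
        else:
            cur_end = max(cur_end, e)  # overlapping stop: extend the current interval
    if cur_end is not None:
        stopped += cur_end - cur_start
    return (shift_end - shift_start) - stopped
```

For example, 600 picks per minute at 30 picks per centimeter works out to 36,000 picks per hour divided by 3,000 picks per meter, or 12 meters per hour.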

Question: How do I handle real-time data from 50+ looms without overloading the network or ERP?

Answer: You have to implement edge aggregation. Use a robust industrial gateway or edge device on each line to pre-process the data, for example by calculating hourly averages and bundling events. Then send only summarized, context-rich packets to the ERP at defined intervals. If you send a raw firehose of sensor readings instead, you'll cripple the ingestion layer.
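A minimal sketch of that aggregation step is shown below. It turns raw pick-rate samples and stop events into one summary packet per loom per window. The input tuple shapes, the one-hour window, and the packet fields are assumptions for illustration. A real gateway would also handle persistence and delivery retries.

```python
from collections import defaultdict
from statistics import mean
from typing import Dict, Iterable, List, Tuple

def summarize(
    readings: Iterable[Tuple[str, float, float]],  # (loom_id, ts_seconds, pick_rate)
    events: Iterable[Tuple[str, float, int]],      # (loom_id, ts_seconds, stop_code)
    window_s: int = 3600,
) -> List[Dict]:
    """Collapse raw samples and stop events into one packet per loom per time window."""
    rates: Dict[Tuple[str, int], List[float]] = defaultdict(list)
    for loom_id, ts, value in readings:
        rates[(loom_id, int(ts // window_s))].append(value)

    stops: Dict[Tuple[str, int], Dict[int, int]] = defaultdict(lambda: defaultdict(int))
    for loom_id, ts, code in events:
        stops[(loom_id, int(ts // window_s))][code] += 1

    packets = []
    for key in sorted(set(rates) | set(stops)):
        loom_id, window = key
        values = rates.get(key, [])
        packets.append({
            "loom_id": loom_id,
            "window_start": window * window_s,
            "avg_pick_rate": mean(values) if values else None,
            "samples": len(values),  # lets the ERP judge how complete the window is
            "stop_counts": dict(stops.get(key, {})),
        })
    return packets
```

Including the sample count in each packet is a design choice. It means the ERP can tell the difference between a quiet hour and an hour where the gateway lost data.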

Question: When is it time to abandon our in-house integration script and look for a dedicated solution?

Answer: It's time when your team spends more hours each week troubleshooting data gaps, correcting mis-mapped values, and managing gateway reboots than it gains in insight from the data. That operational burden is a clear signal that the integration's complexity has outgrown what your internal scripts can handle, and that you need a deterministic IT/OT integration foundation.
