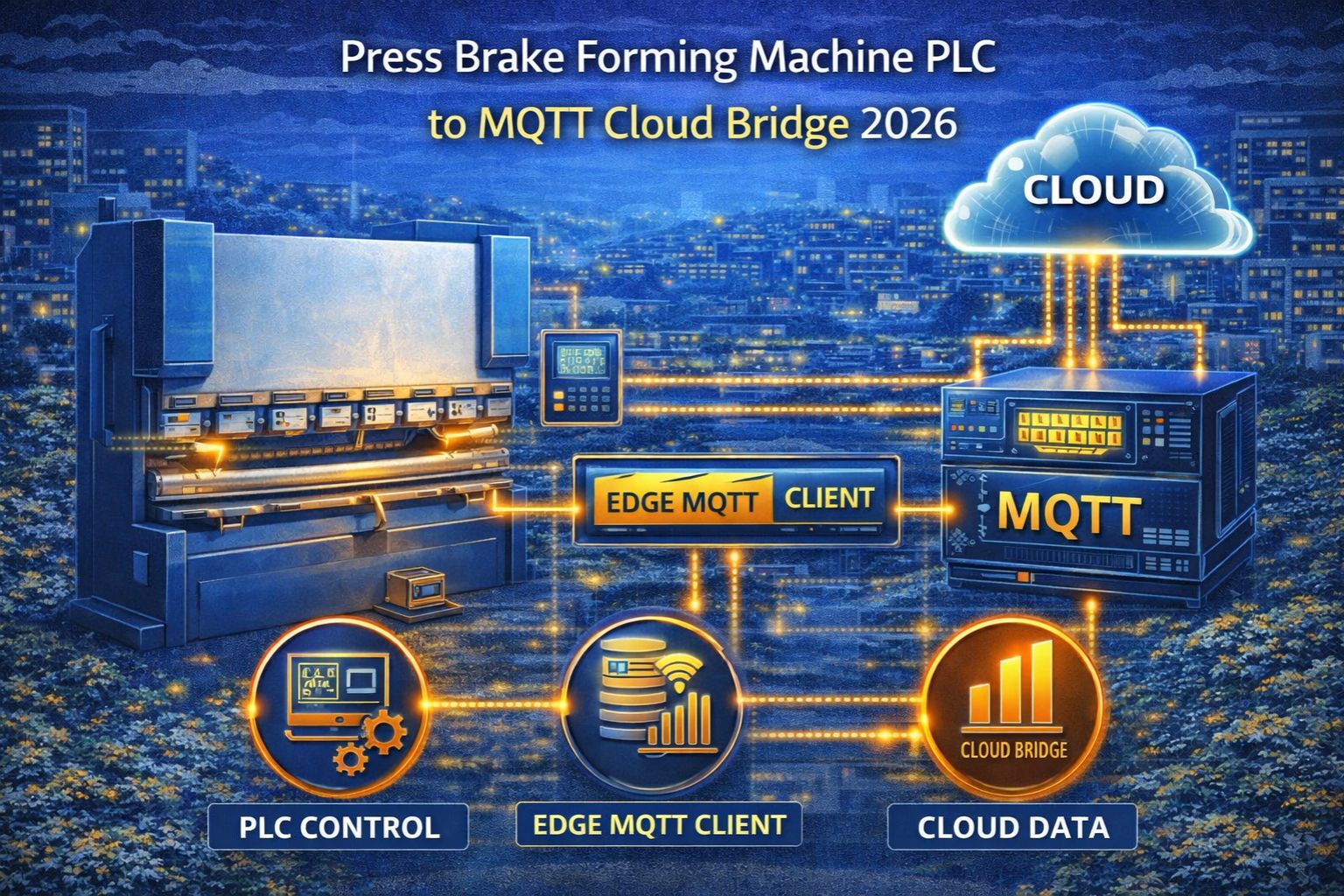

press brake forming machine PLC to MQTT cloud bridge 2026


Connecting a press brake's PLC to an MQTT cloud bridge is a standard digitalization move now, but the hard part isn't the connection itself. It's the hidden timing mismatch: high-speed, deterministic machine cycles run up against the asynchronous, best-effort nature of cloud telemetry. That mismatch quietly erodes your operational visibility, and you might not notice at first.

Clarity: What a PLC-to-MQTT Bridge Actually Means for Press Brakes

For a press brake, this setup usually means a gateway reads cyclic I/O or register data from the PLC (bend angle, tonnage, cycle count) and publishes it as JSON to an MQTT broker. Here's the operational detail teams tend to miss: the PLC's scan cycle, which runs the machine logic, isn't synchronized with the gateway's polling rate or the MQTT publish interval. All three run on different clocks, so the data carries a timing skew from the very first packet.
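One way to make that skew visible is to stamp every message with both the time the registers were read and the time the message was published. The sketch below is illustrative only: the field names (`cycle_count`, `bend_angle_deg`, `tonnage`, `sampled_at_ms`, `published_at_ms`) are assumptions, not a vendor schema, and the actual PLC read and MQTT publish calls are left out.

```python
import json
from dataclasses import dataclass, asdict


@dataclass
class CycleSample:
    """One register snapshot. All field names here are hypothetical."""
    cycle_count: int       # PLC cycle counter at sampling time
    bend_angle_deg: float
    tonnage: float
    sampled_at_ms: int     # gateway clock when the registers were read


def build_payload(sample: CycleSample, published_at_ms: int) -> str:
    """Serialize a sample, keeping read time and publish time separate."""
    body = asdict(sample)
    body["published_at_ms"] = published_at_ms
    # Delay added inside the gateway, before the network is even involved.
    body["gateway_delay_ms"] = published_at_ms - sample.sampled_at_ms
    return json.dumps(body, sort_keys=True)


sample = CycleSample(cycle_count=1042, bend_angle_deg=89.7,
                     tonnage=61.5, sampled_at_ms=1_000_000)
payload = build_payload(sample, published_at_ms=1_000_180)
```

Carrying the cycle counter and the sampling timestamp in the payload lets a consumer order and age the data by when it was read, not by when it happened to arrive.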

Reality Check: Data Flow Under Live Production Pressure

In live production, a press brake might complete a bend every few seconds. The bridge has to capture each cycle's data without falling behind or dropping a cycle. There's a common misunderstanding that "real-time" cloud data means immediate. It doesn't. Network hops, TLS handshakes whenever a secure MQTT connection is established or re-established, and broker queuing all introduce latency spikes. A critical alert for tool wear or misalignment can arrive late, sometimes after you've already produced a handful of faulty parts.
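A rough way to put a number on that risk is to divide the alert latency by the cycle time. The helper below is a back-of-the-envelope sketch: `parts_before_alert` is a hypothetical name, and it assumes a constant cycle time and a fault that affects every part until someone reacts.

```python
import math


def parts_before_alert(latency_s: float, cycle_time_s: float) -> int:
    """Estimate how many full cycles complete while an alert is in flight."""
    if cycle_time_s <= 0:
        raise ValueError("cycle_time_s must be positive")
    if latency_s < 0:
        raise ValueError("latency_s must not be negative")
    return math.floor(latency_s / cycle_time_s)


# A 14 s latency spike on a machine bending every 4 s:
exposed = parts_before_alert(latency_s=14.0, cycle_time_s=4.0)  # 3
```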

Mistake: Assuming MQTT QoS Guarantees Machine-State Fidelity

Here's a critical failure pattern: relying solely on MQTT's Quality of Service (QoS) levels to ensure data fidelity. QoS 1 and QoS 2 can guarantee that a message reaches the broker (QoS 0 is best-effort). But no QoS level does anything to guarantee the *timeliness* or the *contextual accuracy* of the PLC data snapshot. If the gateway happens to poll the PLC in the middle of a register update, or just after an emergency stop, the cloud gets a data point that was delivered correctly but is operationally misleading. That's how you end up with skewed analytics and maintenance triggers that fire at the wrong time.
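One common guard against reading in the middle of a register update is a sequence-counter check around the read. The sketch below assumes something the article does not state: that the PLC program increments a counter to an odd value before it rewrites the register block and to an even value afterwards. `read_seq` and `read_block` are hypothetical callables standing in for whatever driver the gateway uses.

```python
from typing import Callable, Optional


def read_consistent(read_seq: Callable[[], int],
                    read_block: Callable[[], dict],
                    max_attempts: int = 3) -> Optional[dict]:
    """Return a register block only if it was not rewritten during the read."""
    for _ in range(max_attempts):
        before = read_seq()
        block = read_block()
        after = read_seq()
        # Equal and even: no update started or finished while we were reading.
        if before == after and before % 2 == 0:
            return block
    return None  # caller should skip this poll and not publish a torn snapshot


# Simulated counter: the first attempt straddles an update, the second is clean.
seq_values = iter([4, 5, 6, 6])
snapshot = read_consistent(lambda: next(seq_values),
                           lambda: {"bend_angle_deg": 90.0})
```

Returning `None` on repeated failure is a deliberate choice here: skipping a poll is usually easier to reason about downstream than publishing a snapshot that mixes two machine states.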

Decision Help: When to Tune, Reconfigure, or Redesign the Bridge

The decision line is pretty clear, in my view. You can *tune* polling rates and MQTT topics if your latency is under 150 ms and the data is only for monitoring. You need to *reconfigure* with local buffering and state tracking if you're doing fault diagnosis or OEE calculation. But when those internal fixes stop working, specifically when you need sub-second, cycle-accurate data for closed-loop control or predictive quality verification, you're looking at a *redesign*. You'll need deterministic industrial protocols at the edge before the bridge can be called reliable.
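The rule of thumb above can be written down as a small lookup. This is only a sketch: the use-case labels are invented for illustration, and the handling of monitoring data that misses the 150 ms budget is an assumption, since the text does not cover that case.

```python
from enum import Enum


class BridgeAction(Enum):
    TUNE = "tune"
    RECONFIGURE = "reconfigure"
    REDESIGN = "redesign"


# Hypothetical use-case labels; the groupings follow the paragraph above.
CYCLE_ACCURATE = {"closed_loop_control", "predictive_quality"}
STATEFUL = {"fault_diagnosis", "oee"}


def recommend(use_case: str, latency_ms: float) -> BridgeAction:
    """Map a use case and measured latency to tune / reconfigure / redesign."""
    if use_case in CYCLE_ACCURATE:
        return BridgeAction.REDESIGN
    if use_case in STATEFUL:
        return BridgeAction.RECONFIGURE
    if use_case == "monitoring":
        # Assumption: monitoring that misses the 150 ms budget escalates
        # to reconfiguration.
        return BridgeAction.TUNE if latency_ms < 150 else BridgeAction.RECONFIGURE
    raise ValueError(f"unknown use case: {use_case!r}")
```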

FAQ

Question: What is the biggest risk in bridging a press brake PLC to MQTT?

Answer: The biggest risk is data asynchronicity. The cloud dashboard ends up showing a machine state that's several cycles old, and operators make decisions based on outdated information, especially during fast production runs.

Question: Can MQTT handle the high-speed data from a forming machine?

Answer: It can handle the data volume. What it can't provide is deterministic timing. Delivery through the network and the broker adds variable latency, so MQTT isn't suitable for time-critical control signals unless you've built a robust edge architecture that manages timing separately.

Question: How do I know if my bridge latency is causing problems?

Answer: Problems show up as discrepancies. The physical machine counter won't match the cloud-reported production count, or alerts for events such as a tool crash will appear in the cloud log well after they happened on the shop floor.
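A simple check for the first symptom is to look for gaps in the cycle numbers that reached the cloud. The sketch below assumes each message carries a monotonically increasing cycle counter from the PLC; the function names are hypothetical.

```python
from typing import List


def missing_cycles(reported: List[int]) -> List[int]:
    """Cycle numbers absent between the first and last reported cycle."""
    if not reported:
        return []
    seen = set(reported)
    return [c for c in range(min(reported), max(reported) + 1) if c not in seen]


def count_mismatch(machine_count: int, cloud_count: int) -> int:
    """Positive result: the machine ran more cycles than the cloud recorded."""
    return machine_count - cloud_count


gaps = missing_cycles([100, 101, 103, 104, 107])
```

A non-empty `gaps` list, or a mismatch that keeps growing over a shift, suggests the bridge is dropping or overwriting cycles, not just delaying them.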

Question: When should we consider a more advanced solution than a standard MQTT bridge?

Answer: Consider it when you need to correlate press brake data with upstream or downstream station data for full workflow analysis, or when your business case genuinely depends on accurate, cycle-by-cycle data for quality compliance. That's the point where foundational protocol integration, which is what specialists like snipcol focus on, becomes critical.
